Handling Sparse Rewards in Reinforcement Learning Using Model Predictive Control

Authors:

M. Dawood, N. Dengler, J. de Heuvel, M. Bennewitz

Type:

Conference Proceeding

Published in:

IEEE International Conference on Robotics & Automation (ICRA)

Year:

2023

Related Projects:

Embodied AI at LAMARR Institute for Machine Learning and Artificial Intelligence

Links:

BibTeX string

@InProceedings{dawood23icra,
  author = {M. Dawood and N. Dengler and J. de Heuvel and M. Bennewitz},
  title = {Handling Sparse Rewards in Reinforcement Learning Using Model Predictive Control},
  booktitle = {Proc.~of the IEEE International Conference on Robotics \& Automation (ICRA)},
  year = 2023
}

Abstract:

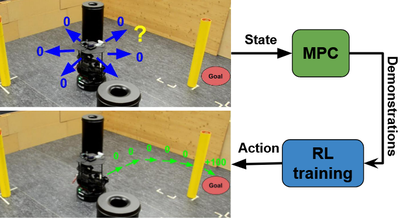

Reinforcement learning (RL) has recently achieved great success in various domains. Yet, designing the reward function requires detailed domain expertise and tedious fine-tuning to ensure that agents learn the desired behaviour. Using a sparse reward conveniently mitigates these challenges. However, a sparse reward poses a challenge of its own, often causing the agent's training to fail. In this paper, we therefore address the sparse reward problem in RL. Our goal is to find an effective alternative to reward shaping that does not rely on costly human demonstrations and is applicable to a wide range of domains. Hence, we propose to use model predictive control (MPC) as an experience source for training RL agents in sparse reward environments. Without the need for reward shaping, we successfully apply our approach to mobile robot navigation, both in simulation and in real-world experiments with a Kobuki TurtleBot 2. We furthermore demonstrate substantial improvements over pure RL algorithms in terms of success rate as well as the number of collisions and timeouts. Our experiments show that using MPC as an experience source improves the agent's learning process for a given task in the case of sparse rewards.
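The abstract states that MPC serves as an experience source for an RL agent trained on a sparse reward, but it does not say how the two are combined. The Python sketch below illustrates one plausible reading under explicit assumptions. It uses a toy 2D point robot, not the TurtleBot setup. The MPC is a random-shooting planner with a hand-written dense cost that exists only inside the planner. At each step, the action comes from MPC with a fixed probability `p_mpc`, and every transition is stored with the sparse reward in a replay buffer for an off-policy learner, whose update step is omitted. All names (`mpc_action`, `collect_episode`, `rl_policy`, `p_mpc`, the goal and obstacle constants, the reward values) are hypothetical and are not taken from the paper.

```python
import math
import random
from collections import deque

# Hypothetical 2D navigation task (illustrative only, not the paper's setup).
GOAL = (2.0, 2.0)
OBSTACLE = (1.0, 1.2)
OBSTACLE_RADIUS = 0.3
GOAL_TOLERANCE = 0.2
DT = 0.1
# Eight discrete unit-speed headings.
ACTIONS = [(math.cos(i * math.pi / 4), math.sin(i * math.pi / 4)) for i in range(8)]


def step(state, action):
    """Single-integrator motion model: move DT along the chosen heading."""
    return (state[0] + DT * action[0], state[1] + DT * action[1])


def sparse_reward(state):
    """Sparse reward: nonzero only on terminal events (values are assumptions)."""
    if math.dist(state, GOAL) < GOAL_TOLERANCE:
        return 1.0, True
    if math.dist(state, OBSTACLE) < OBSTACLE_RADIUS:
        return -1.0, True
    return 0.0, False


def mpc_action(state, horizon=10, samples=64, rng=random):
    """Random-shooting MPC: sample action sequences, roll them out with the model,
    score them with a dense cost, and return the first action of the cheapest one.
    The dense cost exists only inside the planner; the agent never sees it."""
    best_cost, best_first = float("inf"), None
    for _ in range(samples):
        plan = [rng.choice(ACTIONS) for _ in range(horizon)]
        s, cost = state, 0.0
        for a in plan:
            s = step(s, a)
            cost += math.dist(s, GOAL)
            if math.dist(s, OBSTACLE) < OBSTACLE_RADIUS:
                cost += 100.0  # heavy collision penalty
        if cost < best_cost:
            best_cost, best_first = cost, plan[0]
    return best_first


def rl_policy(state, rng=random):
    """Placeholder for the learner's policy; here it simply acts uniformly at random."""
    return rng.choice(ACTIONS)


def collect_episode(buffer, p_mpc, max_steps=200, rng=random):
    """Roll out one episode, taking each action from MPC with probability p_mpc and
    from the RL policy otherwise. Transitions are stored with the sparse reward only,
    so an off-policy learner can train on them. Returns the final reward (0.0 on timeout)."""
    state = (0.0, 0.0)
    for _ in range(max_steps):
        if rng.random() < p_mpc:
            action = mpc_action(state, rng=rng)
        else:
            action = rl_policy(state, rng=rng)
        next_state = step(state, action)
        reward, done = sparse_reward(next_state)
        buffer.append((state, action, reward, next_state, done))
        state = next_state
        if done:
            return reward
    return 0.0


replay_buffer = deque(maxlen=100_000)
rng = random.Random(0)
for episode in range(5):
    outcome = collect_episode(replay_buffer, p_mpc=0.5, rng=rng)
    print(f"episode {episode}: final reward {outcome}, buffer size {len(replay_buffer)}")
```

The planner's dense cost never enters the buffer, so the learner still trains only on the sparse signal. The MPC-driven steps simply make goal-reaching transitions far more likely to appear in the buffer. One could also anneal `p_mpc` toward zero as the policy improves. The abstract does not say whether the paper does this.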